ComfyUI and TouchDesigner are both powerful node-based toolkits for building complex workflows. With TouchDesigner as the main visual processing tool and ComfyUI as the AI workflow controller, we get a neat combination for designing and experimenting with generative AI.

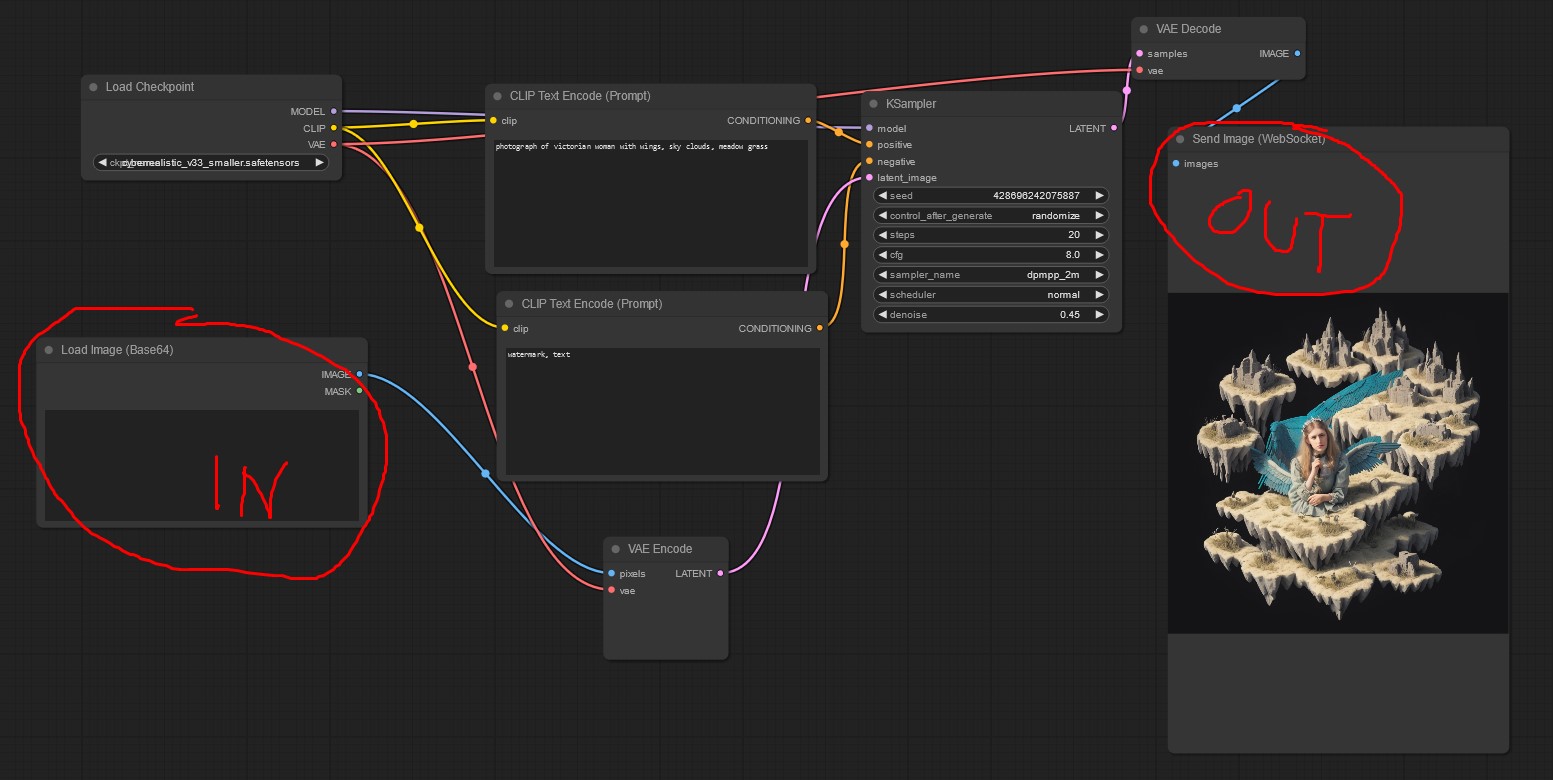

COMFYUI WORKFLOW

In this project, ComfyUI mainly serves as a kind of rendering engine. We preprocess real-time camera input and send the image data as a base64 string to the ComfyUI input node. ComfyUI processes the image, and the results are sent back to TouchDesigner via WebSockets.
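To make the base64 step concrete, here is a minimal Python sketch of how an encoded camera frame could be turned into a text-safe string and wrapped in a message. It uses only the standard library. The function names and the JSON keys `type` and `image` are assumptions for illustration, not the format the downloadable workflow actually expects, and the WebSocket transport itself is left out.

```python
import base64
import json


def encode_frame(image_bytes: bytes) -> str:
    """Return the image bytes as an ASCII base64 string."""
    return base64.b64encode(image_bytes).decode("ascii")


def decode_frame(b64_string: str) -> bytes:
    """Invert encode_frame: turn a base64 string back into raw bytes."""
    return base64.b64decode(b64_string)


def build_message(image_bytes: bytes) -> str:
    """Wrap the encoded frame in a JSON message.

    The key names "type" and "image" are hypothetical; the input node
    of the real workflow defines what it expects.
    """
    return json.dumps({"type": "frame", "image": encode_frame(image_bytes)})


# Stand-in for an encoded camera frame (for example PNG or JPEG bytes).
frame = b"\x89PNG\r\n\x1a\n" + bytes(range(16))
message = build_message(frame)

# The receiving side reverses the steps to recover the original bytes.
restored = decode_frame(json.loads(message)["image"])
```

Base64 inflates the payload by roughly a third, so it is worth scaling frames down before encoding when latency matters.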

DOWNLOAD: COMFYUI_TO_TD_WORKFLOW.JSON

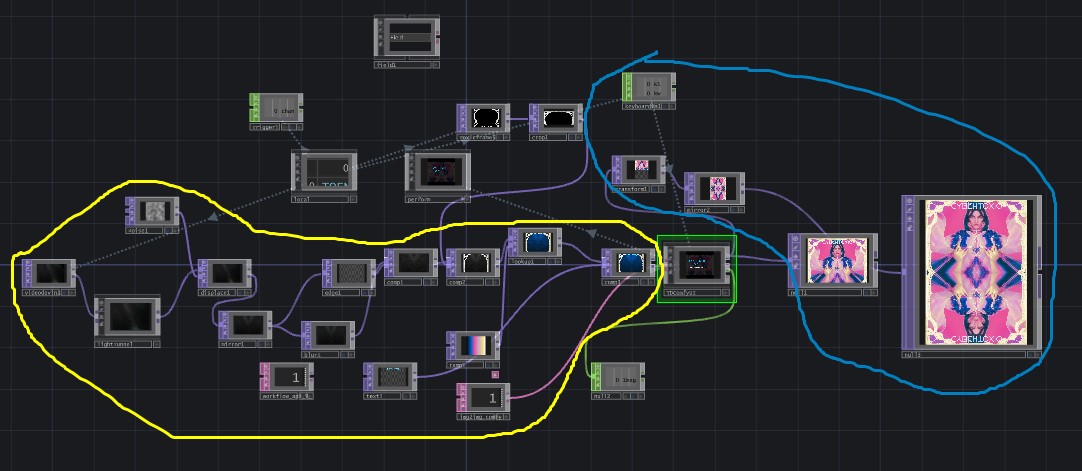

TOUCHDESIGNER WORKFLOW

TouchDesigner is the host toolkit for this project: it handles both pre- and postprocessing. Raw video footage is filtered, mirrored, color graded and overlaid with static graphics, then fed into the ComfyUI interface. The results from ComfyUI are processed again to achieve a custom look.
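In the project itself these preprocessing steps are built from TouchDesigner operators, not written as code. Purely as an illustration of what mirroring, color grading and overlaying do to the pixels, here is a small sketch in plain Python on a tiny image stored as nested lists. The function names, the gain/gamma grading and the uniform-alpha blend are assumptions chosen for simplicity, not the author's actual settings.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB, each channel in 0.0..1.0
Image = List[List[Pixel]]           # rows of pixels


def mirror(image: Image) -> Image:
    """Flip the image horizontally by reversing every row."""
    return [list(reversed(row)) for row in image]


def grade(image: Image, gain: float = 1.0, gamma: float = 1.0) -> Image:
    """Apply a simple gain, clamp to 0.0..1.0, then apply a gamma curve."""
    def curve(v: float) -> float:
        return min(1.0, max(0.0, v * gain)) ** (1.0 / gamma)
    return [[(curve(r), curve(g), curve(b)) for r, g, b in row] for row in image]


def overlay(image: Image, graphic: Image, alpha: float) -> Image:
    """Blend a same-sized static graphic over the image with a uniform alpha."""
    return [
        [tuple(alpha * o + (1.0 - alpha) * c for c, o in zip(px, gpx))
         for px, gpx in zip(row, grow)]
        for row, grow in zip(image, graphic)
    ]


# A 1x2 test image: one red pixel, one blue pixel.
frame = [[(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]]
logo = [[(1.0, 1.0, 1.0), (1.0, 1.0, 1.0)]]  # hypothetical white overlay

processed = overlay(grade(mirror(frame), gain=0.5), logo, alpha=0.0)
```

The order matters: mirroring before the overlay keeps the static graphic readable, since only the camera image is flipped.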

DOWNLOAD: TD_COMFY_WORKFLOW.TOE

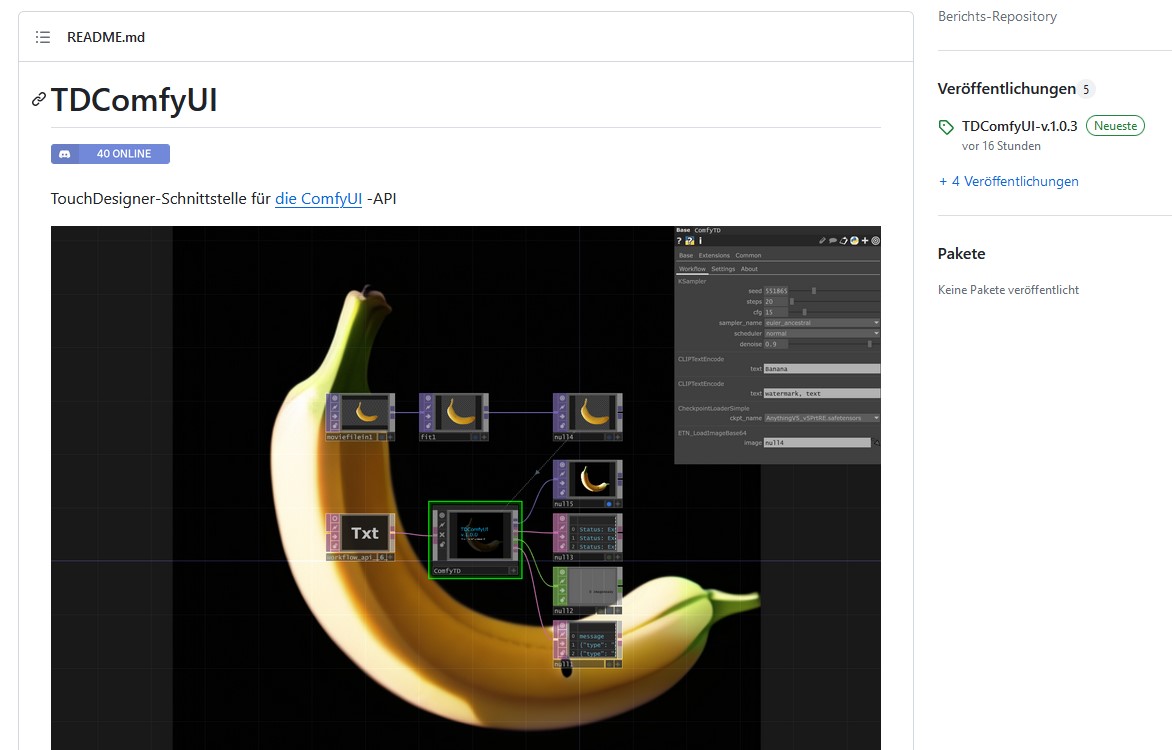

SOURCES

Please check out the toolkit I used for this workflow, by OLEG CHOMP!